Weekly Metaverse #122: How AI will change the way we interact with the digital world

Making the metaverse accessible, no controllers needed

The Metaverse in the Real World

I know I wrote about AR last week, but I’ve seen a couple of things this week that I think are neat, so allow me to share and opine a bit. After all, if you can’t opine in your own newsletter, where can you opine, y’know?

First, there’s this really fantastic AR overlay called PsyGan AR, created by Sean Simon. Just give it a watch - it’s a quick video and it feels like a real glimpse into the future. I expect that one day, we’ll all have AR glasses/contacts modifying the world around us - maybe it’ll be a little bit, or maybe we’ll all be in our own stylized versions of reality. (Speaking of which, Michigan’s Augmented Reality Mural Festival is a great example of AR enhancing the physical world.)

And second, there’s Ford with AR headlights in its cars - rather than projecting information onto your eye (or even onto a HUD on your windshield), the system projects it onto the road in front of you via the headlights.

This has a few big benefits. First, you’re already looking at the road in front of you anyway (or at least you should be), so there’s no need to move the focus of your eyes elsewhere. Second, it can directly show you information relating to the physical world around you. Wondering how tight of a squeeze it’ll be to fit into that parking spot in front of you? See an image of your car’s width projected on it. It’s also not hard to imagine additional projectors being placed on other parts of the car to provide features like parallel parking assistance. Third, other people can see what your car projects. This could be useful for things like signaling to pedestrians in front of the car that you’re braking and will come to a full stop before the crosswalk.

It’s a neat idea, though as with any new car-related technology, it’d need to pass muster with regulatory agencies before hitting the road, so don’t expect to see it anytime soon. Still, it’s a good reminder that augmented reality doesn’t just mean glasses - it’s the digital world interacting with the physical one, and there are many forms that can take.

AI in the Metaverse

When I consider what the metaverse is, I don’t think that AI is an inherent part of it. I see the metaverse more as a spatial, immersive internet that integrates the physical world with the digital world. You can have any number of amazing metaversal AR and VR experiences with no AI involvement.

That said, I think the two topics are destined to become inextricably intertwined, in that AI will enable a change in how we interact with the digital world, one that’s happening in parallel with the rise of the metaverse. From creating digital spaces to enabling useful commercial interactions, AI will ultimately underpin most of our digital lives.

There are obviously an endless number of places where AI will work for (with?) us, but I think the use case that people will become the most accustomed to is using an AI personal assistant to interact with the metaverse.

Right now, when you pop on your VR headset, you have to wave around controllers and press buttons to get where you want to go - it’s decidedly un-futuristic, it can be quite awkward and unnatural, and it certainly breaks the feeling of immersion. Now replace that with an AI assistant. If you’ve just heard about Beat Saber, you simply say, “Download Beat Saber,” and it lets you know the price, asks for confirmation that you’re willing to pay it, and upon receiving that confirmation, authorizes a credit card charge and downloads the app.

It’s a simple use case, but it requires an understanding of a few different things, plus it requires the AI to take a few different steps, knowing when to get confirmation along the way.
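For the code-curious, here’s a rough sketch of that flow: look up the app, state the price, wait for a yes, and only then charge and download. Everything in it is hypothetical and made up for illustration (the `Store` class, the `handle_download` function, the placeholder price). It’s not how any real headset or store works, just the shape of the "confirm before you spend" logic.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class Store:
    """Hypothetical in-memory app store; prices here are placeholders."""
    prices: Dict[str, float]
    charged: List[Tuple[str, float]] = field(default_factory=list)
    installed: Set[str] = field(default_factory=set)


def handle_download(app: str, store: Store, confirm: Callable[[str], bool]) -> str:
    """Handle a spoken "Download <app>" request, pausing for confirmation."""
    # Step 1: figure out which app was meant and what it costs.
    price = store.prices.get(app)
    if price is None:
        return f"Sorry, I couldn't find {app}."

    # Step 2: never spend money without an explicit yes from the user.
    if not confirm(f"{app} costs ${price:.2f}. Want me to buy it?"):
        return f"Okay, I won't buy {app}."

    # Step 3: only after confirmation, charge the card and install.
    store.charged.append((app, price))
    store.installed.add(app)
    return f"{app} is downloaded and ready to go."
```

The `confirm` callback stands in for the assistant asking you out loud. Passing it in as a parameter keeps the "when do I need permission?" decision in one obvious place.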

Once the app’s downloaded, you can ask your AI assistant to start it and play a song you’d like at a difficulty level that’s challenging but not too hard. On top of parsing the language of your request, your AI now has to pull information about what music you like (say, by looking at your Spotify history and comparing it to available tracks on Beat Saber) and your level of skill at dexterity-based video games.
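Stripped way down, that second request might look something like the sketch below: count which artists show up most in your listening history, find a matching track in the game’s catalog, and nudge the difficulty one notch above what you’re comfortable with. The names (`Track`, `pick_track`, `pick_difficulty`), the difficulty labels, and the idea of a numeric skill level are all assumptions for illustration, not anything Spotify or Beat Saber actually exposes.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional

# Assumed difficulty labels, ordered from easiest to hardest.
DIFFICULTIES = ["Easy", "Normal", "Hard", "Expert"]


@dataclass(frozen=True)
class Track:
    title: str
    artist: str


def pick_track(history_artists: List[str], catalog: List[Track]) -> Optional[Track]:
    """Pick the catalog track whose artist appears most often in the history."""
    plays = Counter(artist.lower() for artist in history_artists)
    candidates = [t for t in catalog if plays[t.artist.lower()] > 0]
    if not candidates:
        return None  # nothing in the catalog matches the user's taste
    return max(candidates, key=lambda t: plays[t.artist.lower()])


def pick_difficulty(comfortable_level: int) -> str:
    """Go one step above the user's comfortable level, capped at the top."""
    return DIFFICULTIES[min(comfortable_level + 1, len(DIFFICULTIES) - 1)]
```

A real assistant would obviously weigh a lot more than artist counts, but the basic move is the same: combine what it knows about you with what’s actually available.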

Consider how much lower the barrier to entry into the metaverse will be when you can just pop on a headset (or put in your AR contacts) and tell your AI assistant what you want. Eventually it’ll get to the point where you can sit in your metaverse office and plan a vacation - “I want to go to a big city that’s got great nightlife but is also affordable and not more than an eight-hour flight away.” Not a problem. “Find me a hotel with a pool that’s similar to the one I stayed in when I went to Lima last month.” And so forth and so on - you get the point.

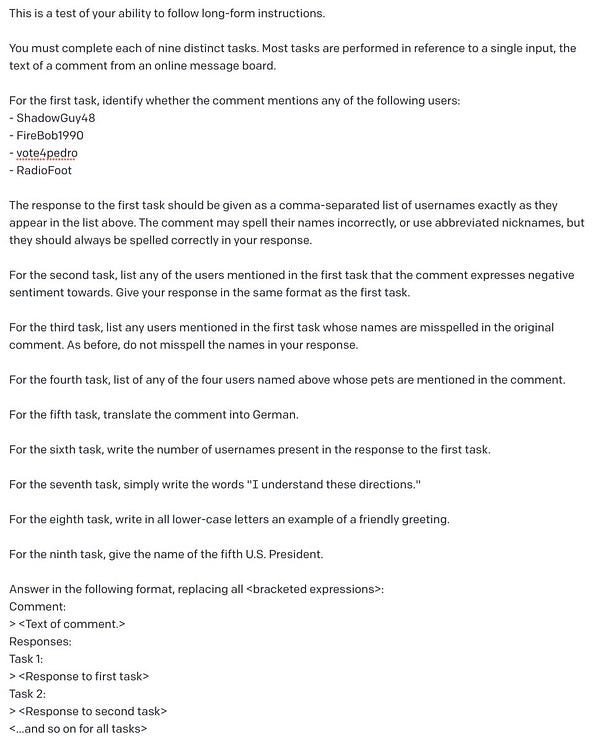

Anyway, the impetus for all of this is a couple of Tweets - first, this thread of highly complex, multi-step requests to GPT-3:

That’s just bananas to me! It really is. I don’t really know where you draw the line between truly “understanding” requests and general complex language modeling, but I suppose the difference between those things matters a lot less than the capabilities of our AI tools.

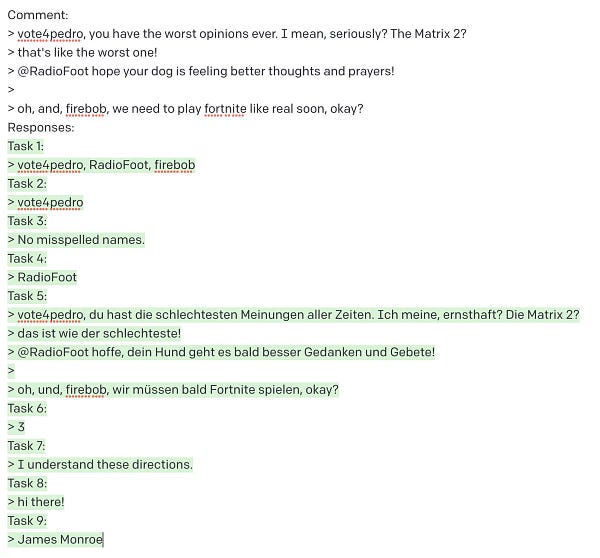

And to follow that, we’ve got Robert Scoble Tweeting:

I genuinely can’t wait to see what it can do. Between the “understanding” we see here and the creative power of tools like DALL-E, it seems clear that we’re on the precipice of big changes to how we interact with the digital world. Ultimately, I think people will remember the shift to the metaverse not only for the move to AR and VR, but also for how we changed from interacting with computers via rote, typed instructions that had to be formatted in precise ways to just talking to them the way we’d talk to another human (and actually being understood).

The future is bonkers, man. Anyway, here’s some news from the last week for you. We’re going to try doing away with the categories this week.

Mark Zuckerberg responds to metaverse memes with a redesign: Meta launched Horizon Worlds in France and Spain, people criticized the fact that the whole thing looks like a ’90s cartoon (ReBoot, anyone?), and Mark said something along the lines of “nuh-uh, our metaverse is gonna look super sweet, we just weren’t trying to make it look cool in the screenshots!”

Virtual pop star Polar takes over metaverse, sets sights on potential real-world gigs: ABBA recently performed in hologram form, and if they can do that, there’s no reason a digital pop star can’t perform in a physical arena. The walls between the digital and physical worlds are beginning to crumble…

Metaverse Safety Week Aims To Safeguard The Virtual World: Good to see that there are folks focused on safety.

Modulate gets $30M to detox game voice chat with AI: Speaking of safety, this kind of thing will become important in much more than just games. I just hope it doesn’t hear me shouting at my dog that she’s being a dumbass and ban me…

USC film school gets immersive storytelling studio: Investing in the future of storytelling.

Learn how to write an article about the metaverse in 9 minutes: A critical read for those of you who wish to join me among the exclusive ranks of dudes who write about metaverse stuff.